In the previous posts in this series, we introduced the concept and discovery of Ki-67, its role as a prognostic biomarker in breast cancer, and its predictive value for chemotherapy response. Now, in this issue, let’s explore the research conducted by the International Ki-67 in Breast Cancer Working Group (IKBCWG) to advance the standardization of Ki-67 index assessment methods!

International Ki-67 in Breast Cancer Working Group (IKBCWG)

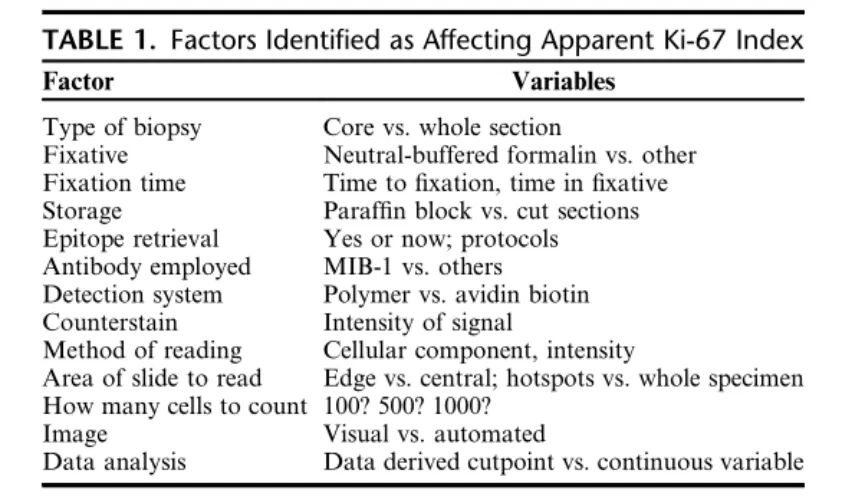

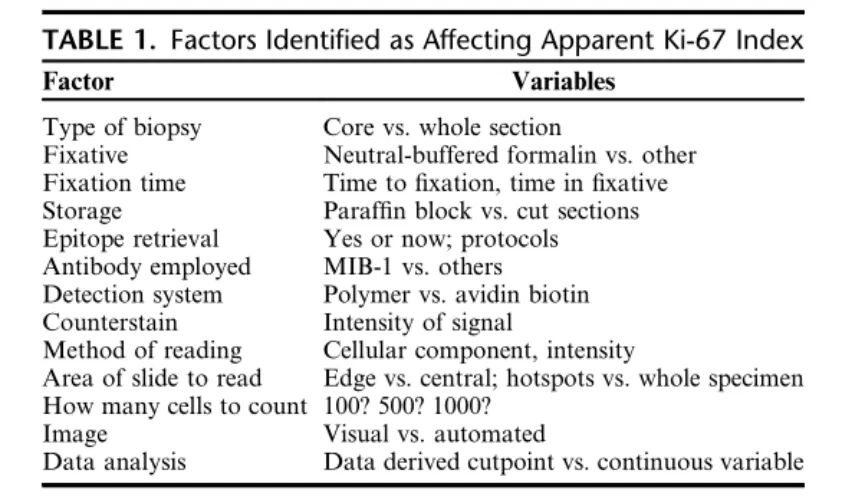

A core issue identified in many of the published studies mentioned above is the lack of standardization in Ki-67 index assessment methods. The IKBCWG was established in 2009 to address this problem in breast cancer, covering all pre-analytical and analytical variables. The IKBCWG also aims to provide a central repository where the pathology community can evaluate current Ki-67 research and clinical practices involving Ki-67. Table 1 lists the variables identified by the IKBCWG that potentially impact the validity and reproducibility of Ki-67 assessment in breast cancer.

Table 1. Factors identified as affecting the characterization of Ki-67 index

Similar to other biomarkers in breast cancer (such as ER, PR, and HER-2), the question of which type of specimen (core needle biopsy or surgical resection) to use for Ki-67 assessment has been raised. In reviewing literature from studies published over the past decade, Kalvala et al. demonstrated that the concordance of results between these two specimen types from the same patient ranged from 70.3% to 92.7%, with Ki-67 assessment methods again determined by each laboratory’s unique approach. Kalvala and colleagues concluded that it was not possible to determine which specimen is preferred, but the more important conclusion was the urgent need for consensus on Ki-67 testing. However, a more definitive study by the IKBCWG on 120 breast cancer cases, using quantitative image analysis of Ki-67 index, demonstrated that core needle biopsy tissue analysis yielded higher (~5%) Ki-67 scores compared to corresponding resection specimens. Furthermore, it has been suggested that this discrepancy is related to pre-analytical factors such as fixation.

Others have studied the impact of such pre-analytical factors. In a 2016 study using HT-69 xenografts in mice, prolonged formalin fixation had a significant effect on the Ki-67 index of excised tumors: when fixation time was extended from 24 h to 48 h, the index decreased from 15% to 5%. A similar decrease in Ki-67 index was found in a study of human breast cancer surgical specimens, with the magnitude of the reduction increasing with longer fixation times. Notably, ER and HER-2 expression in the same specimens showed no parallel decline in IHC signal with fixation time. That study also examined the effect of inadequate fixation (e.g., 3 h), which led to a sharp drop in Ki-67 index (again, a phenomenon not observed in ER or HER-2 IHC on the same samples). However, another study using core needle breast biopsies found no effect of brief fixation on Ki-67 index: specimens fixed for 45 to 90 minutes showed high overall concordance with routinely fixed specimens. Clearly, fixation time is less critical for core needle biopsies than for resection specimens, providing further reason to recommend core needle biopsy specimens.

How to calculate the Ki-67 index

Various laboratories worldwide employ four main methods to calculate the Ki-67 index. These include: (a) “eyeballing” estimation; (b) visual counting using a microscope or viewing software (real-time counting); (c) manual counting of camera-captured or digital images; (d) automated (i.e., image analysis-based) counting methods.

“Eyeballing” the Ki-67 index generally means estimating the percentage of Ki-67-positive tumor cells, typically under a 10× objective lens. The method is still widely used, and the literature documents that it saves time compared with formal counting. However, many published studies also indicate that eyeballing is inaccurate and poorly reproducible. For example, a study of 27 gastrointestinal neuroendocrine carcinomas (where Ki-67 assessment is also clinically required for tumor classification) compared eyeballing with manual and digital counting of 2000 cells. Measured against the quantified Ki-67 fractions, eyeballing proved to be an inappropriate method, yielding correct scores in only 55% to 93% of cases. In another comparison of Ki-67 index determination in breast cancer, eyeballing correlated very poorly with actual counting methods.
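As a minimal sketch of the arithmetic behind the counting-based methods, the index is simply the share of counted tumor cells that stain positive. The function name and the example counts below are illustrative assumptions, not values taken from the cited studies.

```python
def ki67_index(positive_cells: int, total_cells: int) -> float:
    """Return the Ki-67 index as a percentage of counted tumor cells."""
    if total_cells <= 0:
        raise ValueError("total_cells must be positive")
    if not 0 <= positive_cells <= total_cells:
        raise ValueError("positive_cells must lie between 0 and total_cells")
    return 100.0 * positive_cells / total_cells


# Hypothetical count: 360 positive nuclei among 2000 counted tumor cells.
print(f"Ki-67 index: {ki67_index(360, 2000):.1f}%")
```

An eyeballed estimate skips the counting step entirely, which is why its accuracy can only be judged against a counted value like this one.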

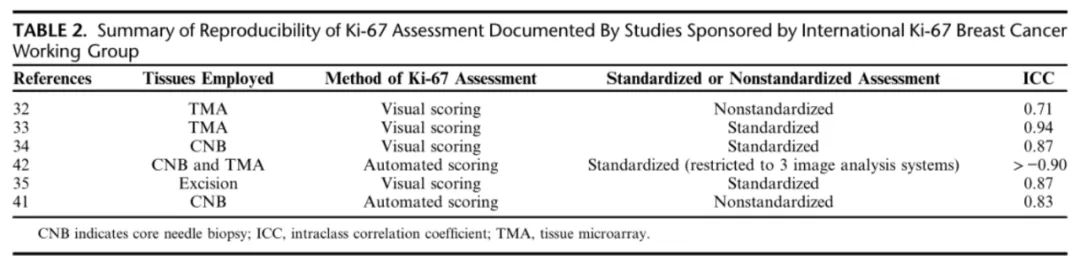

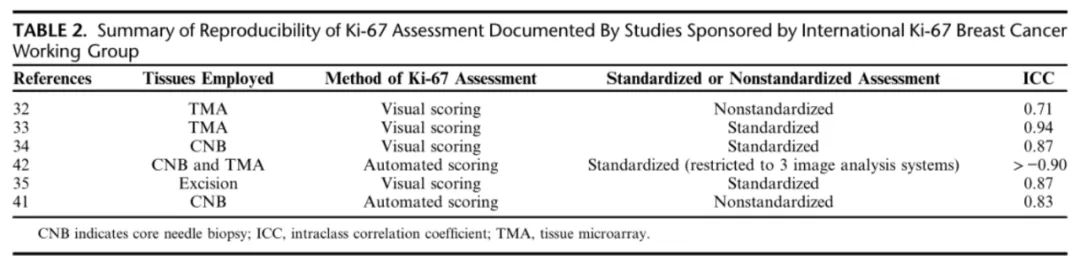

As part of a systematic evaluation of Ki-67 counting methods, in the first publication of the IKBCWG, eight different laboratories (each with a documented history of publishing one or more Ki-67 studies) evaluated 100 breast cancer samples using their own Ki-67 assessment methods in the context of a single core tissue microarray (TMA), and results were compared among this group of “experts.” They used a wide range of methods, some employing “eyeballing,” others “real-time counting.” The intraclass correlation coefficient (ICC) was used to assess inter-laboratory variability (good reliability generally refers to ICCs between 0.6 and 0.8, excellent reliability to ICCs above 0.8). This initial IKBCWG assessment showed calculated ICCs ranging from 0.59 (when slides were locally immunostained) to 0.71 (when slides were centrally immunostained). The IKBCWG concluded that, given this variability, the clinical utility of Ki-67 assessment in breast cancer remains elusive, and suspected that differences in scoring methods were a major cause of this inconsistency.
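To make the ICC figures more concrete, here is a minimal sketch of one common form of the statistic, ICC(2,1) (two-way random effects, absolute agreement, single rater). The article does not state which ICC form the IKBCWG used, so the choice of ICC(2,1) is an assumption, as are the function names and the example scores. The reliability bands follow the thresholds quoted above.

```python
from typing import Sequence


def icc_2_1(scores: Sequence[Sequence[float]]) -> float:
    """ICC(2,1) for a complete cases-by-laboratories table.

    scores[i][j] is the Ki-67 index that laboratory j reported for case i.
    """
    n = len(scores)      # number of cases
    k = len(scores[0])   # number of laboratories
    if n < 2 or k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need a complete table with >= 2 cases and >= 2 labs")

    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    # Mean squares from a two-way ANOVA without replication.
    ms_rows = k * sum((m - grand) ** 2 for m in row_means) / (n - 1)
    ms_cols = n * sum((m - grand) ** 2 for m in col_means) / (k - 1)
    ss_err = sum(
        (scores[i][j] - row_means[i] - col_means[j] + grand) ** 2
        for i in range(n)
        for j in range(k)
    )
    ms_err = ss_err / ((n - 1) * (k - 1))

    denominator = ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n
    if denominator == 0:
        raise ValueError("ICC is undefined when all scores are identical")
    return (ms_rows - ms_err) / denominator


def reliability_band(icc: float) -> str:
    """Map an ICC to the bands quoted in the text (0.6-0.8 good, >0.8 excellent)."""
    if icc > 0.8:
        return "excellent"
    if icc >= 0.6:
        return "good"
    return "below good"


# Hypothetical Ki-67 indices (%) for four cases scored by three laboratories.
example = [
    [12.0, 15.0, 10.0],
    [30.0, 34.0, 27.0],
    [5.0, 8.0, 4.0],
    [48.0, 55.0, 45.0],
]
value = icc_2_1(example)
print(f"ICC(2,1) = {value:.2f} ({reliability_band(value)})")
```

Because this form measures absolute agreement, a laboratory that scores consistently higher than the others lowers the ICC even when it ranks the cases identically.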

In a second study, the IKBCWG directly addressed the above issue by instructing laboratories to adopt a standardized, prescribed scoring system. This method involved counting 250 cells at the top and bottom of the core, with observers using a “virtual” cell counter that simulates a benchtop cytology cell counter and examining each tumor cell in a “typewriter” manner, counting it as positive or negative. When each of the 16 participating laboratories scored the same slides, a very high ICC of 0.94 was achieved using this standardized method. Nevertheless, upon examining individual cases, clinically significant discrepancies still existed in some cases, with critical ranges of 10% to 20% identified.

In the third study by the IKBCWG, three different Ki-67 scoring methods were directly compared, acknowledging this as a more “realistic” test than the prescribed “typewriter” counting method used in previous studies. The counting methods were as follows: (a) global, counting 100 cells in four regions; (b) global weighted (including a weighted estimate of total area); (c) hotspot, counting a single field of 500 cells. Among these three methods, only the unweighted global score met the specified success criteria, with an ICC of 0.87. The significance of this finding is that high inter-observer agreement can be achieved when scoring Ki-67 on TMAs with manual region selection and without special instrumentation. In a follow-up study using whole slides instead of TMAs, the same ICC of 0.87 was found. However, in the latter two studies by the IKBCWG, it was noted that a small but significant number of cases still showed large discrepancies between laboratories.
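The difference between the three scoring methods can be sketched in a few lines. This is an illustrative simplification under stated assumptions: the `Region` class, the `area_weight` field, and the example counts are hypothetical, and the article does not describe how the IKBCWG protocol selects regions or estimates area weights.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Region:
    positive: int              # Ki-67-positive tumor cells counted in the region
    total: int                 # all tumor cells counted in the region
    area_weight: float = 1.0   # hypothetical: estimated share of tumor area

    @property
    def index(self) -> float:
        """Ki-67 index of this region as a percentage."""
        return 100.0 * self.positive / self.total


def global_unweighted(regions: Sequence[Region]) -> float:
    """(a) Global: plain average of the regional indices."""
    return sum(r.index for r in regions) / len(regions)


def global_weighted(regions: Sequence[Region]) -> float:
    """(b) Weighted global: regional indices averaged by estimated area."""
    total_weight = sum(r.area_weight for r in regions)
    return sum(r.index * r.area_weight for r in regions) / total_weight


def hotspot(field: Region) -> float:
    """(c) Hotspot: index of the single most proliferative field."""
    return field.index


# Hypothetical case: four regions of 100 cells each, plus one 500-cell hotspot.
regions = [
    Region(10, 100, area_weight=0.4),
    Region(20, 100, area_weight=0.3),
    Region(30, 100, area_weight=0.2),
    Region(40, 100, area_weight=0.1),
]
print(f"global:          {global_unweighted(regions):.1f}%")
print(f"weighted global: {global_weighted(regions):.1f}%")
print(f"hotspot:         {hotspot(Region(150, 500)):.1f}%")
```

In a heterogeneous tumor the three numbers can differ substantially for the same slide, so results are only comparable between laboratories that use the same method.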

Does image analysis help?

Digital pathology and image analysis offer the potential to significantly improve the accuracy and reproducibility of diagnostic pathology, including the assessment of biomarkers. In the next international study conducted by the IKBCWG, the approach was similar to the first study, where laboratories were invited to assess the Ki-67 index using any technique they preferred. In this study employing image analysis, centrally immunostained slides were sent to 14 different laboratories for digital scanning and automated assessment of Ki-67 index; these laboratories used a total of 10 different image analysis programs. The experiment, using a criterion of ICC significantly above 0.8, was successful, with an average automated ICC of 0.83, much higher than the 0.71 found in earlier studies with manual scoring. Thus, the ICC for Ki-67 assessment based on non-standardized image analysis was comparable to that for Ki-67 assessment using standardized methods for visual evaluation. In fact, in a follow-up study, even better ICCs were obtained by restricting participants to one of three popular image analysis systems (HALO, QuantCenter, and QuPath), with intra-platform ICCs exceeding 0.97 and inter-platform ICCs of 0.95. These IKBCWG studies strongly suggest that image analysis is easier to standardize than manual counting techniques and can yield highly reproducible platform-independent, operator-independent, and vendor-independent scores in Ki-67 assessment for breast cancer. However, it should be noted that, whether visual or automated scoring systems were used, the ICCs obtained for excised biopsies using standardized assessment methods were almost identical (Table 2).

Table 2. Summary of Ki-67 assessment reproducibility documented in IKBCWG studies

| Antibody name | Product number | Clone number | Cellular localization |
| --- | --- | --- | --- |
| Ki-67 | RMA-0731 | MXR002 | Nuclear |
| Ki-67 | MAB-0672 | MX006 | Nuclear |

References:

Gown, Allen M. The Biomarker Ki-67: Promise, Potential, and Problems in Breast Cancer [J]. Applied Immunohistochemistry & Molecular Morphology. DOI: 10.1097/PAI.0000000000001087.
